Is Data Annotation Legit? An Honest Guide for 2026


What You're Actually Looking At

You've seen the ads. "Earn $500 a week labeling images from home." "AI companies are hiring data annotators — no experience needed." They show up in Facebook groups, Indeed listings, sponsored posts on Reddit, and probably in your own social media feeds right now.

Your first instinct is probably skepticism. Good. That instinct will save you time, money, and possibly your identity.

But here's the part most articles skip: the skepticism is warranted for most of what you're seeing, and misdirected at the rest. The honest answer to "is data annotation legit" is more complicated than a single word. The work is real. The demand is real. The pay, in most cases, is disappointing. And the scams are absolutely everywhere.

This guide cuts through all of it. By the end, you'll know what data annotation actually is, where the real opportunities are, what you can realistically earn, how to spot the most common scam patterns, and whether your time is better spent elsewhere.

What Data Annotation Actually Is

Before judging whether it's legitimate, you need to understand what the work involves.

Data annotation is the process of labeling information so that AI systems can learn from it. When a company trains a machine learning model to recognize objects in photos, identify sentiment in text, or transcribe speech, human annotators are often the ones who label the training data first. They draw bounding boxes around cats in images, tag an email as "spam" or "not spam," transcribe audio clips, and flag content that violates policy guidelines.

Almost every AI model you've interacted with relies, at some stage, on data that someone, somewhere, annotated by hand. This is not a metaphor. This is the supply chain of artificial intelligence.
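To make the idea concrete, here is a minimal Python sketch of what two labeled items might look like once an annotator has finished with them: a bounding box around a cat in a photo, and a spam label on an email. The class names, field names, and allowed-label set are hypothetical; every platform and project defines its own schema, and this is not any particular company's format.

```python
from dataclasses import dataclass

# Hypothetical record types for illustration; real platforms each define their own schema.


@dataclass
class BoundingBox:
    """A rectangle an annotator draws around an object, in pixel coordinates."""
    x: int       # left edge
    y: int       # top edge
    width: int
    height: int


@dataclass
class ImageAnnotation:
    image_id: str
    label: str        # what the box contains, e.g. "cat"
    box: BoundingBox


@dataclass
class TextAnnotation:
    text_id: str
    label: str        # e.g. "spam" or "not spam"


# Labels the (hypothetical) project guidelines allow for emails.
EMAIL_LABELS = {"spam", "not spam"}

cat_photo = ImageAnnotation("img_001", "cat", BoundingBox(x=34, y=50, width=120, height=90))
email = TextAnnotation("email_042", "spam")

# A basic quality check: reject any label outside the guideline set.
assert email.label in EMAIL_LABELS

print(cat_photo)
print(email)
```

Records like these, collected by the thousands, are what a model is later trained on.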

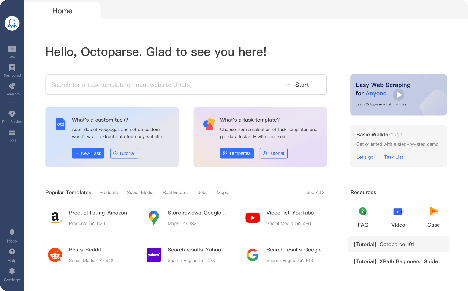

The work is typically done through crowdsourcing platforms that connect AI companies with distributed workers. Companies like Scale AI, Appen, and TELUS International — which runs what was formerly Lionbridge AI — maintain networks of contractors who perform these tasks on a project-by-project basis. Amazon's Mechanical Turk is one of the oldest and largest of these marketplaces, handling everything from simple data categorization to content moderation at scale.

So yes: the industry is real. The work exists. AI companies genuinely depend on it.

What the job ads don't tell you is the rest of the picture.

The Short Answer: Is It Legit?

Here's the honest verdict, stated plainly:

The work is legitimate. The industry is not scam-free. The economics are unfavorable for most people who try it.

Legitimate aspects of data annotation:

Real companies with real contracts pay real money for annotated data

Workers on verified platforms do real work and receive real payment

Basic tasks are straightforward and require no formal education

The work is flexible, remote, and asynchronous, which is genuinely appealing

Areas where you should not trust what you read:

Job ads on social media, Craigslist, and Indeed that impersonate real companies

Any listing promising high, consistent, guaranteed income from annotation work

Platforms or "training programs" that ask you to pay before you can start working

Recruiters who reach out cold with vague job descriptions and no formal process

The gap between the legitimate end of this industry and the predatory end is enormous. Most people's experience falls somewhere in between: technically real work, technically real payment — but with earnings that rarely justify the time invested.

Where the Money Actually Goes

The pay rates in data annotation are not uniform. They vary dramatically by platform, task type, geographic region, and project complexity. What you see on a job ad and what ends up in your bank account are often very different numbers.

Here's an honest breakdown of what to expect:

| Platform / Task Type | Realistic Effective Pay |
|---|---|
| Amazon Mechanical Turk (basic tasks) | $1–5 per hour |
| Clickworker / UHRS | $5–12 per hour |
| Appen (standard projects) | $5–12 per hour |
| Toloka | $3–10 per hour |
| Scale AI / Remotasks (specialized) | $8–20 per hour |
| Expert annotation (medical, legal, multilingual NLP) | $20–50+ per hour |
| Full-time in-house annotation roles | $30,000–60,000 per year |

Several important caveats apply to every number above.

Effective pay is lower than advertised rates. Most platforms pay per task, not per hour. A task that pays $0.05 might take you two minutes or fifteen. Workers consistently report that their effective hourly earnings come in well below what the advertised rates imply, often half or less.

Rejection rates eat into earnings. Quality control on these platforms is strict. A small percentage of incorrectly labeled items can get your work rejected, meaning hours of effort with zero payment. Some workers report rejection rates of 10–20% on their submissions.

Task availability is inconsistent. Many platforms have feast-or-famine volume cycles. You might have forty hours of work one week and almost none the next. This makes consistent income planning nearly impossible.

You're an independent contractor. In the US, this typically means you'll receive a 1099 form, owe self-employment tax, and receive no benefits, health insurance, or paid leave. This further reduces your effective take-home pay.
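It is worth running this arithmetic before committing to a project. The minimal Python sketch below converts a per-task rate into an effective hourly figure and then removes rejected work: at $0.05 per task, two minutes per task works out to $1.50 an hour, and fifteen minutes works out to $0.20. The inputs are illustrative, not data from any platform, and the tax helper is a rough US approximation (15.3% self-employment tax applied to 92.35% of net earnings) that ignores business expenses, income tax, and filing thresholds.

```python
def effective_hourly_pay(pay_per_task: float,
                         minutes_per_task: float,
                         rejection_rate: float = 0.0) -> float:
    """Estimate what an hour of work actually pays.

    pay_per_task     -- dollars credited for each accepted task
    minutes_per_task -- average time per task, including reading instructions
    rejection_rate   -- fraction of submitted tasks rejected and never paid (0.0 to 1.0)
    """
    if minutes_per_task <= 0:
        raise ValueError("minutes_per_task must be positive")
    if not 0.0 <= rejection_rate <= 1.0:
        raise ValueError("rejection_rate must be between 0 and 1")
    tasks_per_hour = 60 / minutes_per_task
    # Rejected tasks still cost time but earn nothing.
    return tasks_per_hour * pay_per_task * (1 - rejection_rate)


def after_self_employment_tax(gross: float) -> float:
    """Rough US approximation: 15.3% self-employment tax on 92.35% of net earnings.

    Ignores business expenses, income tax, and filing thresholds.
    """
    return gross - gross * 0.9235 * 0.153


if __name__ == "__main__":
    for minutes in (2, 15):
        rate = effective_hourly_pay(0.05, minutes)
        print(f"$0.05 task, {minutes} min each: ${rate:.2f}/hour")

    # The same two-minute task, with 10% of submissions rejected.
    rate = effective_hourly_pay(0.05, 2, rejection_rate=0.10)
    print(f"With 10% rejections: ${rate:.2f}/hour")
    print(f"After self-employment tax: ${after_self_employment_tax(rate):.2f}/hour")
```

Because rejected tasks still consume your time, the rejection rate scales the whole hourly figure down rather than trimming a fixed amount.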

For most people in the US, Canada, or Western Europe, basic data annotation work does not pay enough to be worth the time when compared to other gig economy options. The picture improves significantly for specialized, expert-level annotation — but those roles are competitive and require domain expertise.

How to Tell a Scam from a Real Job in 2026

Here's the section that should be required reading before anyone applies to an annotation platform.

Scammers have flooded job boards with fake listings that impersonate real companies. In 2026, AI-generated content has made this problem significantly worse — fraudulent job ads now look polished, use legitimate-sounding language, and are nearly impossible to distinguish from real postings without careful scrutiny.

Red flags that indicate a scam:

Any request for payment to access work — Legitimate platforms pay you. They do not charge you. If a "training program," "certification," or "onboarding fee" is mentioned, walk away.

Guaranteed income claims — No remote gig platform can promise consistent earnings. Anyone who says "you'll make at least $X per week" is lying.

Unsolicited job offers via email or social media — Real companies don't reach out to random people offering annotation work without an application process.

Requests for personal information before a formal onboarding — Your SSN, bank account, or national ID should only be collected through an official platform's secure onboarding process.

Recruiters on Telegram or WhatsApp — Some legitimate platforms do use these channels, but scammers heavily abuse them. Verify any recruiter through the company's official domain before engaging.

Fake check overpayment scams — A "client" sends you a check for more than agreed, then asks you to refund the difference. The original check bounces. You lose the "refunded" amount.

Poor grammar, mismatched branding, and unofficial email domains — A job email from "@scale-annotation.com" instead of "@scale.com" is a scam. Verify the exact domain.
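The exact-domain rule can be checked mechanically. This minimal Python sketch assumes you have built your own allowlist from each company's official website; only scale.com and appen.com, the domains named in this guide, are included here. It extracts the domain from a From: header with the standard library's `email.utils.parseaddr` and accepts it only if it equals an official domain or is a subdomain of one, so look-alikes such as scale-annotation.com fail. Sender addresses can be spoofed, so passing this check is necessary but not sufficient.

```python
from email.utils import parseaddr

# Hypothetical allowlist: confirm each domain on the company's official website first.
OFFICIAL_DOMAINS = {"scale.com", "appen.com"}


def sender_domain(from_header: str) -> str:
    """Return the lowercase domain of a From: header, or '' if there is no address."""
    _, address = parseaddr(from_header)
    _, at, domain = address.rpartition("@")
    if not at:
        return ""  # no '@' means no usable email address
    return domain.lower().rstrip(".")


def is_official_sender(from_header: str) -> bool:
    """True only if the domain equals an official domain or is a subdomain of one."""
    domain = sender_domain(from_header)
    if not domain:
        return False
    return any(domain == d or domain.endswith("." + d) for d in OFFICIAL_DOMAINS)


if __name__ == "__main__":
    for header in (
        "Scale AI Recruiting <jobs@scale.com>",
        "Scale AI Recruiting <jobs@scale-annotation.com>",
        "Appen Careers <hr@appen.com.jobs-portal.net>",
    ):
        print(header, "->", is_official_sender(header))
```

Accepting subdomains (for example, mail.appen.com) is a design choice; a stricter version would list every permitted sending domain explicitly.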

How to verify a company before applying:

Check that the company's official website matches the domain in the job ad. For Scale AI, the domain is scale.com. For Appen, it's appen.com.

Look up the platform's profile on LinkedIn and verify the company has a credible founding story, employee count, and media presence.

Search Reddit communities like r/WorkOnline and r/beermoney for recent worker reviews of the platform.

If you've been targeted, report it to the FTC through its official fraud reporting portal.

Never download software or grant screen-sharing access to anyone who contacts you about a job offer you didn't apply for.
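The exact-domain test applies to any link in a job ad too, and reading a URL by eye is error-prone because the real host is not always the first name you see. This minimal Python sketch uses the standard library's `urllib.parse.urlsplit` to pull out the actual hostname, then accepts it only if it equals an official domain or is a subdomain of one. The allowlist is a hypothetical starting point holding only the two domains named in this guide, and links without a scheme such as https:// are rejected because their host can't be read reliably. A matching link is one check among several, not proof that an offer is real.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: confirm each domain on the company's official website first.
OFFICIAL_DOMAINS = {"scale.com", "appen.com"}


def link_is_official(url: str) -> bool:
    """True only if the link's real hostname is an official domain or a subdomain of one."""
    # urlsplit separates any 'user@' prefix from the actual host; .hostname is lowercased.
    host = urlsplit(url).hostname
    if host is None:
        return False
    host = host.rstrip(".")
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


if __name__ == "__main__":
    for link in (
        "https://scale.com/careers",
        "https://scale.com.apply-now.example/form",  # official name used as a prefix
        "https://scale.com@apply-now.example/form",  # real host is after the '@'
        "https://www.appen.com/jobs",
    ):
        print(link, "->", link_is_official(link))
```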

The Ethical Dimension Nobody Talks About

When you finish reading most articles about data annotation, you'll have a clear sense of whether you can make money doing it. What you'll almost never see is a discussion of what it costs the people who do this work at scale.

The annotators who label the data that trains AI systems are among the most invisible workers in the technology industry. A significant portion of annotation labor is outsourced to workers in the Philippines, Kenya, India, Venezuela, and other countries where effective hourly rates, even when they compare favorably with local wages, are widely criticized as exploitative relative to the value the workers create.

Beyond economic concerns, there is a documented psychological toll. Content moderators and annotators who flag harmful online content, such as child sexual abuse material (CSAM), violence, hate speech, and animal abuse, are exposed to repeated traumatic material with minimal mental health support. Research from Stanford HAI, The Markup, and investigative outlets has documented elevated rates of PTSD, anxiety, and depression among these workers. The organizations that commission this work are often several layers removed from the workers who perform it.

This matters not because it makes data annotation illegitimate — the work is necessary and real — but because it's a dynamic that anyone considering this industry should understand. The Partnership on AI and the AI Now Institute have published guidelines calling for better worker protections, and emerging regulations like the EU AI Act are beginning to address accountability in AI data supply chains. But the industry has a long way to go.

If you decide to try annotation work, you're participating in an industry with genuine ethical complexity. That doesn't make it wrong to do — but it's worth knowing what the work actually involves and who does it at scale.

Best Platforms If You Decide to Try It

If after all of the above you're still interested in trying data annotation work, these are the platforms with the strongest reputation for actually paying their workers:

Appen — One of the most established names in the field, with a wide range of project types from search engine evaluation to speech recognition. More stable than most, though still subject to project-based availability gaps. Rates typically fall in the $5–12 per hour range.

Scale AI — Geared toward more specialized tasks including image annotation, map data, and AI evaluation. Quality standards are demanding, but workers who meet them tend to earn more. A legitimate platform with active projects throughout 2026.

Amazon Mechanical Turk (MTurk) — The oldest and largest crowdsourcing marketplace. Broad task variety but frequently cited as among the lowest-paying platforms. Best used as a secondary option rather than a primary income source.

TELUS International (formerly Lionbridge AI) — Covers search evaluation, social media evaluation, and linguistic annotation. Project availability tends to be more consistent than on most platforms.

Toloka — Originally developed by Yandex, with global reach and tasks available in many languages. Lower barrier to entry but correspondingly variable pay. Useful for workers in regions underserved by other platforms.

Important: No platform can guarantee you work volume or income consistency. Treat these as supplementary income options at best, not reliable employment.

Is There a Career Here?

The honest answer is: probably not, but the skills you build can matter more than the work itself.

Basic data annotation is not a career. Task-based gig work with fluctuating availability, no benefits, and rates that rarely exceed minimum wage in developed countries does not constitute meaningful employment for most adults with financial obligations.

However — and this is an important however — annotation work does develop skills that have real value if you pursue them intentionally.

The discipline of consistent, accurate labeling builds attention to detail at a level that transfers directly to quality assurance, data analysis, and AI/ML evaluation roles. Workers who treat annotation seriously often develop genuine expertise in data quality assessment, taxonomy design, and human-in-the-loop AI systems. Some workers use annotation platforms as a proving ground before moving into higher-paying AI operations roles, data analysis, or ML engineering.

Skills worth developing while doing annotation work:

Data quality assessment — understanding what "good" labeled data looks like and why it matters

Taxonomy and classification systems — the logic behind how content is categorized in AI pipelines

NLP familiarity — working with text annotation tasks builds intuition for how language models process meaning

AI evaluation and red-teaming — testing AI outputs for errors, bias, and failure modes

If you approach annotation as a way to build these skills — and potentially use them as a bridge into more substantive AI work — it has more value than it does as an end in itself.

The Honest Summary

Data annotation is legitimate work. It is not a scam: the tasks are real and the money exists. The problem is that much of what you'll encounter online is fraudulent, and most of the legitimate work available pays poorly relative to the time investment.

If you have time, are interested in AI and data work, and approach it with realistic expectations — not "side hustle riches," but genuine curiosity about how AI systems are trained — you can have a worthwhile experience on the legitimate platforms listed above.

If you're looking for remote income, stick to established platforms like the ones above, verify everything before sharing personal information, and don't pay anyone for access to work that should pay you.

The AI industry needs human-labeled data. That need is real. Whether it needs you at the rates being offered is a question only you can answer after doing the research — which is exactly what you just did.