7 Essential Python Coding Tips for Effective Web Scraping and Data Extraction

Web scraping has become one of those skills that sounds technical but opens up enormous practical possibilities. Whether you're tracking competitor prices, gathering research data, or building a dataset for machine learning, Python remains the go-to language for the job. But there's a gap between "it works" and "it works well."

These tips come from real scraping projects—the kind where things break at 2 AM, where websites change structure without warning, and where performance matters when you're dealing with thousands of pages. Let me share what actually moves the needle.

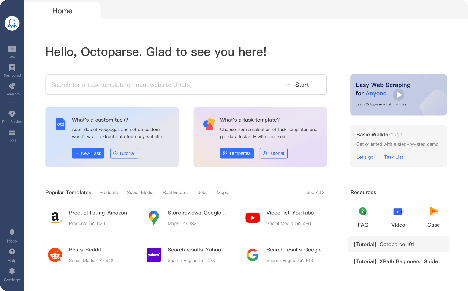

1. Match Your Library to the Job

One of the biggest mistakes I see? Using the wrong tool. BeautifulSoup, Scrapy, and Selenium all scrape websites, but that's where the similarity ends.

BeautifulSoup is the lightweight option. It parses HTML after you fetch it with requests. Great for simple projects where you're pulling data from a few dozen pages. The learning curve is gentle, and it handles malformed HTML gracefully—because let's face it, most websites have some mess in their markup.

Scrapy is the framework approach. It handles crawling, requesting, pipelines, and exporting out of the box. If you're building something that needs to follow links across thousands of pages, Scrapy's architecture pays off. Yes, there's more setup involved, but the scalability is genuine.

Selenium plays a different game entirely. It actually loads a browser and runs JavaScript. That's necessary for sites that build content client-side or require interaction (scrolling, clicking, logging in). The tradeoff is speed—you won't match BeautifulSoup's throughput.

Pick based on the site, not your preference. Static HTML with clean markup? BeautifulSoup. Multi-page crawl at scale? Scrapy. JavaScript-rendered content? Selenium (or consider Playwright as a faster alternative).
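
One quick way to make that call is to fetch the raw HTML and check whether text you can see in the browser is actually in it. If it isn't, the page is probably rendered by JavaScript. Here's a rough sketch of the decision. The names `is_static` and `pick_tool` and the 1,000-page threshold are illustrative assumptions, not hard rules:

```python
def is_static(raw_html: str, marker: str) -> bool:
    """Return True if text visible in the browser is already in the raw HTML."""
    return marker in raw_html


def pick_tool(raw_html: str, marker: str, page_count: int,
              crawl_threshold: int = 1000) -> str:
    """Suggest a library; crawl_threshold is an assumed cutoff, tune it to taste."""
    if not is_static(raw_html, marker):
        # The content is missing from the raw response, so it is likely built client-side
        return "Selenium or Playwright"
    if page_count >= crawl_threshold:
        return "Scrapy"
    return "requests + BeautifulSoup"
```

For example, `pick_tool('<div id="app"></div>', "Blue Widget", 30)` points you at a browser-based tool, because the product name never shows up in the raw markup.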

2. Tame Dynamic Content

Speaking of JavaScript—some sites simply won't give you what you need without executing code. That's where Selenium becomes relevant, but using it naively leads to slow, flaky scripts.

The key is explicit waits. Don't just time.sleep() and hope content loads. Use WebDriverWait with expected conditions:

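    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    driver = webdriver.Chrome()  # Assumes Chrome is installed locally
    driver.get("https://example.com")

    # Block until the element is in the DOM, or raise TimeoutException after 10 seconds
    element = WebDriverWait(driver, 10).until(
        EC.presence_of_element_located((By.CSS_SELECTOR, ".content-loaded"))
    )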

This waits up to 10 seconds for the element to appear. Much better than guessing at timing.

Infinite scroll is another common pain point. The pattern is straightforward: send the END key to jump to the bottom of the page, wait for new content to load, and repeat. The example below does this a fixed 10 times; in practice you'd stop once no new content appears:

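    import time

    from selenium.webdriver.common.by import By
    from selenium.webdriver.common.keys import Keys

    # Assumes an existing WebDriver instance named driver
    driver.get("https://example.com")
    body = driver.find_element(By.TAG_NAME, "body")

    for _ in range(10):
        body.send_keys(Keys.END)  # Jump to the bottom of the page
        time.sleep(2)  # Give AJAX calls time to complete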
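
A fixed number of scrolls either stops too early or wastes time. One common alternative is to keep scrolling until the page height stops growing. This is a minimal sketch, assuming `driver` is a Selenium WebDriver; `scroll_to_bottom`, `pause`, `max_rounds`, and the injectable `sleep` are hypothetical names chosen for illustration:

```python
import time


def scroll_to_bottom(driver, pause=2.0, max_rounds=50, sleep=time.sleep):
    """Scroll until the page height stops growing; return the number of scrolls."""
    last_height = driver.execute_script("return document.body.scrollHeight")
    for rounds in range(1, max_rounds + 1):
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        sleep(pause)  # Give newly requested content time to arrive
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            return rounds  # Nothing new loaded, so we are at the end
        last_height = new_height
    return max_rounds  # Safety cap for pages that never stop growing
```

The `max_rounds` cap matters: some feeds are effectively endless, and you don't want a scraper that scrolls forever.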

One more thing: run Selenium in headless mode when you don't need to watch it work. Same functionality, noticeably faster execution.

3. Performance Actually Matters

When you're scraping hundreds or thousands of pages, the difference between optimized and naive code is massive. We're talking hours versus minutes.

Asynchronous requests are the biggest win. While requests waits for each page to download before moving to the next, aiohttp handles multiple pages concurrently:

    import asyncio

    import aiohttp


    async def fetch_page(session, url):
        async with session.get(url) as response:
            return await response.text()


    async def crawl(urls):
        # One shared session reuses connections across all requests
        async with aiohttp.ClientSession() as session:
            tasks = [fetch_page(session, url) for url in urls]
            return await asyncio.gather(*tasks)

Because the waits overlap instead of adding up, this can easily be 10x faster than sequential requests on network-bound workloads.

But there's a catch: sending 100 concurrent requests at once can trigger rate limits almost immediately. Use a semaphore to cap concurrency:

    semaphore = asyncio.Semaphore(5)  # Max 5 simultaneous requests
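
The semaphore only helps if every request acquires it before hitting the network. Here's one way to wire that up, sketched with the fetch coroutine passed in as a parameter so the pattern works with aiohttp or any other async client. `bounded_gather`, `fetch`, and `limit` are hypothetical names:

```python
import asyncio


async def bounded_gather(urls, fetch, limit=5):
    """Run fetch(url) for every URL, with at most `limit` running at once."""
    semaphore = asyncio.Semaphore(limit)

    async def guarded(url):
        # Tasks beyond the limit wait here until a slot frees up
        async with semaphore:
            return await fetch(url)

    # gather returns results in the same order as the input URLs
    return await asyncio.gather(*(guarded(url) for url in urls))
```

With aiohttp you would pass something like `lambda url: fetch_page(session, url)` as `fetch`, inside the `async with aiohttp.ClientSession()` block.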

Memory management matters too, especially with large datasets. Use generators instead of lists when processing items one at a time. Clear variables you're done with. If you're dealing with massive pages, consider streaming the response rather than loading it all into memory.
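
To make the generator point concrete, here's a small sketch that yields one record at a time and writes it straight to CSV, so the full dataset never sits in memory. The "name,price" line format is a toy stand-in for real parsing, and `iter_items` and `write_items` are hypothetical names:

```python
import csv
from typing import Iterable, Iterator


def iter_items(pages: Iterable[str]) -> Iterator[dict]:
    """Yield one record per non-empty line instead of building a full list."""
    for page in pages:
        for line in page.splitlines():
            name, _, price = line.partition(",")
            if name.strip():
                yield {"name": name.strip(), "price": price.strip()}


def write_items(items: Iterable[dict], out) -> int:
    """Stream records to an open file-like object; return how many were written."""
    writer = csv.DictWriter(out, fieldnames=["name", "price"])
    writer.writeheader()
    count = 0
    for item in items:  # Only one item is held in memory at a time
        writer.writerow(item)
        count += 1
    return count
```

Because `iter_items` is lazy, `pages` can itself be a generator that fetches each page on demand.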

4. Don't Get Blocked

This is where many scraping projects die. You build something that works beautifully—for about an hour. Then your IP gets flagged, and suddenly every request returns 403.

The first line of defense is looking like a real browser. Set proper headers:

    import requests

    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36",
        "Accept-Language": "en-US,en;q=0.9",
    }
    response = requests.get(url, headers=headers)

Rotate these. Keep a list of common user agents and cycle through them.
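
A simple rotation can be sketched with `itertools.cycle`. The user-agent strings below are examples only and will go stale, so swap in current ones; `USER_AGENTS` and `next_headers` are hypothetical names:

```python
import itertools

# Example strings only; replace with user agents that are current for your targets
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:124.0) Gecko/20100101 Firefox/124.0",
]
_agent_cycle = itertools.cycle(USER_AGENTS)


def next_headers() -> dict:
    """Return request headers using the next user agent in the rotation."""
    return {
        "User-Agent": next(_agent_cycle),
        "Accept-Language": "en-US,en;q=0.9",
    }
```

Each request then becomes `requests.get(url, headers=next_headers())`.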

Rate limiting is equally important. Even if you technically can send 10 requests per second, the website might not appreciate it:

    import random
    import time

    import requests

    for url in urls:
        response = requests.get(url)
        time.sleep(random.uniform(2, 5))  # Random 2-5 second delay between requests

Check robots.txt before scraping. It's not just polite—it tells you what the site expects:

    from urllib.robotparser import RobotFileParser

    rp = RobotFileParser()
    rp.set_url("https://example.com/robots.txt")
    rp.read()  # Downloads and parses the file

    if rp.can_fetch("*", target_url):
        ...  # Safe to scrape: fetch target_url here
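
If you want to see how the rules evaluate without touching the network, `RobotFileParser` can also parse text you already have. The robots.txt content below is made up for illustration, and `load_rules` is a hypothetical helper:

```python
from urllib.robotparser import RobotFileParser

# Made-up rules for illustration only
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 3
"""


def load_rules(robots_text: str) -> RobotFileParser:
    """Build a parser from robots.txt text that has already been downloaded."""
    rp = RobotFileParser()
    rp.parse(robots_text.splitlines())
    return rp


rules = load_rules(ROBOTS_TXT)
allowed = rules.can_fetch("*", "https://example.com/products/1")
blocked = rules.can_fetch("*", "https://example.com/private/report")
delay = rules.crawl_delay("*")  # Seconds the site asks you to wait, or None
```

When a site declares a crawl delay, it makes a sensible lower bound for the sleep between your requests.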

For serious scraping at scale, you'll need proxies. Services like Bright Data or SmartProxy rotate residential IPs, making detection much harder.

5. Clean Data Early, Not Later

Raw scraped data is rarely ready for analysis. You'll encounter missing values, inconsistent formatting, encoding issues, and duplicates. Handle these early in your pipeline.

Missing values are inevitable:

    import pandas as pd

    df = pd.DataFrame(scraped_data)
    # Assumes 'price' is already numeric; convert strings like "$49.99" first
    df['price'] = df['price'].fillna(df['price'].median())
    df['description'] = df['description'].fillna('')

Text normalization prevents headaches later:

    df['title'] = df['title'].str.strip().str.lower()
    df['category'] = df['category'].str.replace(r'\s+', ' ', regex=True)

Type conversion matters—prices stored as "$49.99" are strings, not numbers:

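    # regex=False treats '$' as a literal character rather than a regex anchor
    df['price'] = df['price'].str.replace('$', '', regex=False).str.replace(',', '', regex=False).astype(float)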

Build these cleaning steps into your pipeline from the start. Retrofitting is always more painful.
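
The same cleaning can also happen at scrape time, before rows ever reach pandas. Here's a plain-Python sketch that parses prices, normalizes titles, and drops exact duplicates. `parse_price`, `clean_records`, and the `title`/`price` field names are assumptions for illustration:

```python
import re
from typing import Optional


def parse_price(raw: Optional[str]) -> Optional[float]:
    """Convert strings like "$1,299.99" to a float; return None if no number is found."""
    if not raw:
        return None
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        return None
    return float(match.group().replace(",", ""))


def clean_records(records):
    """Normalize titles, parse prices, and drop exact duplicates (first one wins)."""
    seen = set()
    cleaned = []
    for record in records:
        # Collapse runs of whitespace, trim the ends, and lowercase
        title = " ".join((record.get("title") or "").split()).lower()
        price = parse_price(record.get("price"))
        key = (title, price)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"title": title, "price": price})
    return cleaned
```

Returning `None` for unparseable prices keeps the missing-value decision (fill, drop, or flag) in one place later in the pipeline.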

6. Storage Strategy Depends on Scale

For a small project—hundreds or a few thousand rows—CSV or JSON works fine. They're readable, portable, and require no setup.

When things grow, databases make more sense. SQLite handles moderate datasets without requiring a database server:

    import sqlite3

    conn = sqlite3.connect('scraped_data.db')
    # if_exists='replace' drops and recreates the table on every run
    df.to_sql('products', conn, if_exists='replace', index=False)
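
For scrapers that run repeatedly, replacing the whole table each time may not be what you want. One alternative is to key rows on the page URL and update them in place. This is a sketch using only the standard library; the `url`/`title`/`price` schema and the `save_products` helper are assumptions, not a recommended layout:

```python
import sqlite3


def save_products(conn, rows):
    """Insert (url, title, price) rows, overwriting any row with the same URL."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS products (
               url TEXT PRIMARY KEY,
               title TEXT,
               price REAL
           )"""
    )
    conn.executemany(
        "INSERT OR REPLACE INTO products (url, title, price) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # In-memory database for the demo
save_products(conn, [("https://example.com/a", "widget", 49.99)])
save_products(conn, [("https://example.com/a", "widget", 44.99)])  # Same URL, new price
row_count, latest_price = conn.execute(
    "SELECT COUNT(*), MAX(price) FROM products"
).fetchone()
```

After the second save there is still a single row for that URL, now carrying the newer price.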

For larger operations, PostgreSQL offers better querying, concurrent access, and scalability. Consider cloud storage (AWS S3, Google Cloud Storage) if you're dealing with files or need to share data across systems.

One pattern worth mentioning: timestamp your saves. If you're running ongoing scrapes, you'll want to compare data over time:

    import datetime

    timestamp = datetime.datetime.now().strftime("%Y%m%d_%H%M%S")
    df.to_csv(f'data_{timestamp}.csv')

7. Debugging Happens

Your code will break. Websites change. Networks fail. Elements move. Accept this early.

Logging is non-negotiable:

    import logging

    import requests

    logging.basicConfig(
        filename='scrape.log',
        level=logging.INFO,
        format='%(asctime)s - %(levelname)s - %(message)s',
    )

    try:
        response = requests.get(url, timeout=10)
        response.raise_for_status()  # Turn 4xx/5xx responses into exceptions
    except requests.RequestException as e:
        logging.error(f"Failed to fetch {url}: {e}")

Retry logic handles transient failures:

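    import requests
    from requests.adapters import HTTPAdapter
    from urllib3.util.retry import Retry

    session = requests.Session()
    # Retry up to 3 times with backoff, including on common transient status codes
    retries = Retry(total=3, backoff_factor=1, status_forcelist=[429, 500, 502, 503, 504])
    adapter = HTTPAdapter(max_retries=retries)
    session.mount('http://', adapter)
    session.mount('https://', adapter)  # Without this, HTTPS requests are not retried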
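
The same idea applies to steps that don't go through a requests session, such as a Selenium action or a database write. Here's a generic exponential-backoff sketch; it illustrates the general pattern rather than urllib3's exact timing, and `retry_with_backoff` and its parameters are hypothetical names:

```python
import time


def retry_with_backoff(func, attempts=3, base_delay=1.0,
                       exceptions=(Exception,), sleep=time.sleep):
    """Call func(); on failure wait base_delay * 2**n seconds, then try again."""
    for attempt in range(attempts):
        try:
            return func()
        except exceptions:
            if attempt == attempts - 1:
                raise  # Out of attempts, so let the caller see the error
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ... with the defaults
```

Narrowing `exceptions` to the errors you actually expect (timeouts, connection resets) keeps genuine bugs from being silently retried.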

Use browser developer tools to inspect elements when selectors break (and they will). Keep your selectors resilient—prefer stable attributes like data-* fields or specific class names rather than generated IDs.

Final Thoughts

These tips cover the arc of a real scraping project: selecting tools, handling complexity, staying fast, staying allowed, cleaning data, storing results, and handling when things go wrong. Start with one or two that match your current challenge, then expand.

The best scraper isn't the fastest—it's the one that keeps running when everyone else's has broken.