Best Data Labeling Tools in 2026: Open Source Takes the Lead


Introduction

If you've ever trained a machine learning model, you already know the uncomfortable truth: your algorithm is only as good as the data it's fed. And before any model can learn anything, someone has to label that data — painstakingly, accurately, at scale. For years, that meant expensive enterprise contracts, opaque pricing, and the quiet hope that your annotation workforce was doing a decent job.

In 2026, that equation is breaking down. Open-source tools like Label Studio and CVAT have quietly matured into production-grade platforms, and the rise of LLM-assisted pre-labeling means teams can build high-quality training datasets for a fraction of what they paid just two years ago. The real story isn't which tool has the most features — it's which tool fits your team's actual constraints.

This guide cuts through the noise. We'll cover the tools that matter, the pricing traps to watch for, and a decision framework so you can pick the right solution for your workflow.

How AI Has Transformed Data Labeling: From Manual to ML-Assisted

The traditional data labeling workflow looked like this: hire annotators (or outsource to a third-party workforce), have them manually draw bounding boxes or tag text spans, and then cross your fingers that the quality was consistent. It worked — barely — but it was slow, expensive, and didn't scale.

Two forces have fundamentally changed that picture.

Active learning is the first. Rather than labeling every data point, active learning pipelines identify the samples a model is most uncertain about and route only those to human annotators. If a model already classifies 80% of your images correctly and with high confidence, you don't need a human labeling those; you need a human focusing on the edge cases that actually improve model performance. In favorable cases this alone can reduce labeling costs by 50–80%, though the savings depend heavily on your domain and on how well calibrated the model's confidence is.
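To make the idea concrete, here is a minimal sketch of uncertainty sampling, one common way to pick which samples go to annotators. It ranks samples by the entropy of the model's predicted class probabilities and keeps the top few. The function names, sample ids, and probabilities are hypothetical, and real pipelines often use other uncertainty measures.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget):
    """Return the ids of the `budget` most uncertain samples.

    `predictions` maps a sample id to the model's class probabilities.
    Higher entropy means the model is less sure, so those samples go first.
    """
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]


# Illustrative (made-up) model outputs for four unlabeled images.
predictions = {
    "img_001": [0.98, 0.01, 0.01],
    "img_002": [0.40, 0.35, 0.25],
    "img_003": [0.55, 0.44, 0.01],
    "img_004": [0.90, 0.05, 0.05],
}

to_label = select_for_labeling(predictions, budget=2)
print(to_label)  # ['img_002', 'img_003']
```

The two images the model is confident about never reach a human, which is where the savings come from.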

Foundation model pre-labeling is the second, and arguably bigger, shift. LLMs and vision models like CLIP and SAM (Segment Anything Model) can now generate high-quality labels on raw data that need only minimal human correction. Think of it as a powerful first pass: the model labels, say, 70% of your dataset confidently, and a human reviewer spot-checks those labels and corrects or completes the remaining 30%. It's not fully automated labeling, and quality assurance still matters, but it's a dramatic cost and time reduction for teams that know how to use it.
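The first-pass split described above can be sketched as a simple routing step. This is an illustration only: the 0.9 threshold, the 10% spot-check rate, and the record fields are assumptions, not values from any particular tool, and you would tune them against your own error analysis.

```python
import math
import random


def route_prelabels(prelabels, threshold=0.9, spot_check_rate=0.1, seed=0):
    """Split model pre-labels into confident, spot-check, and review queues."""
    confident = [p for p in prelabels if p["confidence"] >= threshold]
    needs_review = [p for p in prelabels if p["confidence"] < threshold]

    # Sample a fixed fraction of the confident labels for human spot-checks,
    # so silent model errors in the "easy" bucket still get caught.
    k = math.ceil(spot_check_rate * len(confident))
    spot_check = random.Random(seed).sample(confident, k)
    return confident, spot_check, needs_review


# Hypothetical pre-labels produced by a foundation model.
prelabels = [
    {"id": "doc_1", "label": "invoice", "confidence": 0.97},
    {"id": "doc_2", "label": "receipt", "confidence": 0.62},
    {"id": "doc_3", "label": "invoice", "confidence": 0.93},
    {"id": "doc_4", "label": "contract", "confidence": 0.41},
]

confident, spot_check, needs_review = route_prelabels(prelabels)
print([p["id"] for p in needs_review])  # ['doc_2', 'doc_4']
```

Model confidence scores are not always well calibrated, so the spot-check queue matters as much as the review queue.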

The tools that embrace these workflows — whether natively or through API integrations — are pulling ahead of the ones still stuck in the manual era.

The Top Data Labeling Tools in 2026: A Category-by-Category Breakdown

Not all data labeling tools are created equal, and the "best" choice depends heavily on your use case, team size, and budget. Here's how the landscape breaks down.

Open-Source Tools

Label Studio (labelstud.io) is the most versatile open-source option available. It handles images, video, audio, text, and even time-series data with a highly customizable UI. The self-hosted option means you keep your data on your own infrastructure — critical for teams in healthcare, finance, or any regulated industry. Its ML backend integration lets you connect active learning pipelines, and the API-first design means it's programmable at scale. The catch: the UI can feel clunky compared to polished SaaS products, and the initial setup requires some technical comfort.

CVAT (cvat.ai) dominates the computer vision space. Originally built at Intel and now maintained by CVAT.ai, it's the de facto standard for open-source video and image annotation in the CV community. It supports auto-annotation with built-in DL models and interpolation for video tracks, and the self-hosted edition is free and open source. If you're doing object detection, semantic segmentation, or video analysis, CVAT is worth serious consideration. Its strength is narrow, though: step outside CV and you'll outgrow it quickly.

Doccano is a lightweight, open-source option purpose-built for NLP text annotation tasks like named entity recognition, sentiment labeling, and text classification. If your team works primarily with text data and wants something simpler than Label Studio, Doccano gets the job done without the overhead.

Enterprise Platforms

Labelbox (labelbox.com) is the enterprise leader for large-scale MLOps teams. Its strength is governance and collaboration at scale: model-assisted labeling, inter-annotator agreement tracking, data curation pipelines, and integrations with every major ML framework. The interface is polished and the workflow tooling is mature. The honest downside is price — Labelbox is built for teams with serious budgets, and smaller teams or startups often find the cost hard to justify.

Scale AI (scale.com) positions itself as the end-to-end AI data platform. Beyond labeling, it offers data curation, synthetic data generation, and managed workforce options. Its managed labeling service — where Scale provides trained annotators for your project — removes the operational headache of hiring and QA-ing your own workforce. This is genuinely useful for companies that need to move fast and don't have annotation operations in-house. The tradeoff is cost: Scale AI is premium-priced, and some practitioners report quality inconsistency on edge-case annotations despite the premium.

Cloud-Native Options

Amazon SageMaker Ground Truth and Google Vertex AI Data Labeling are the choices for teams already committed to AWS or GCP. They offer pay-per-label pricing (no upfront seat cost), native integration with their respective ML platforms, and built-in active learning that routes uncertain annotations to human workers. If you're running your training pipeline on SageMaker or Vertex anyway, these services slot in cleanly. The limitation is lock-in: you pay a premium for the convenience of staying in one cloud ecosystem.

Specialized Tools

Prodigy — from the makers of spaCy — is the tool NLP practitioners tend to reach for. It's scriptable, integrates tightly with spaCy's training loop, and supports active learning out of the box. The model-in-the-loop workflow is elegant: you label, the model trains, the model suggests labels, you correct, repeat. It's a paid tool with no free tier, but for research teams doing serious NLP work, the workflow is worth the cost.

Supervisely, SuperAnnotate, and V7 (Darwin) are cross-category platforms gaining traction for their multi-modal capabilities — handling images, video, documents, and 3D point clouds within a single interface. If your project spans multiple data types, these are worth evaluating.

Open Source Wins: Why Label Studio and CVAT Are Production-Ready

A few years ago, recommending open-source tools for production ML pipelines meant caveats: "they're great for experiments, but you'll need to build your own infrastructure for scale." That caveat no longer holds.

Label Studio's ML backend API lets you wire any model's inference directly into the annotation UI. Connect a model-serving endpoint, and your pre-labeling pipeline runs automatically as annotators work: they correct what the model got wrong instead of starting from scratch. The community has built integrations with PyTorch, TensorFlow, Hugging Face, and most major cloud ML services. Version 1.0 and later brought stability improvements that make it realistic to run in production without a dedicated DevOps team babysitting it.
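As a rough illustration of what a pre-label looks like on the Label Studio side, the sketch below converts pixel-space bounding boxes from a detector into a task with a `predictions` entry, following the pre-annotation JSON layout as described in the Label Studio documentation. The helper name and the input box format are hypothetical, and the `from_name`, `to_name`, and `image` keys are assumptions that must match the names in your own labeling configuration. Check the format against the docs for the version you run.

```python
def to_label_studio_task(image_url, width, height, boxes, model_version="demo-v1"):
    """Build one Label Studio task dict with model predictions attached.

    `boxes` is a list of dicts with pixel coordinates (x_min, y_min, x_max,
    y_max), a `label`, and a `score`. Label Studio expects rectangle
    coordinates as percentages of the image size, so we convert here.
    """
    results = []
    for i, box in enumerate(boxes):
        results.append({
            "id": f"box_{i}",
            "type": "rectanglelabels",
            "from_name": "label",   # assumed name of the labels control tag
            "to_name": "image",     # assumed name of the image object tag
            "original_width": width,
            "original_height": height,
            "value": {
                "x": 100.0 * box["x_min"] / width,
                "y": 100.0 * box["y_min"] / height,
                "width": 100.0 * (box["x_max"] - box["x_min"]) / width,
                "height": 100.0 * (box["y_max"] - box["y_min"]) / height,
                "rotation": 0,
                "rectanglelabels": [box["label"]],
            },
        })

    # Use the weakest box score as the task-level score (a design choice,
    # so low-confidence tasks can be sorted to the top for review).
    score = min((b["score"] for b in boxes), default=0.0)
    return {
        "data": {"image": image_url},
        "predictions": [
            {"model_version": model_version, "score": score, "result": results}
        ],
    }


task = to_label_studio_task(
    "https://example.com/street.jpg", 800, 600,
    [{"x_min": 80, "y_min": 60, "x_max": 400, "y_max": 300, "label": "Car", "score": 0.87}],
)
print(task["predictions"][0]["result"][0]["value"])
```

A list of such task dicts can be saved as JSON and imported into a project, where annotators see the boxes as editable suggestions.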

CVAT has similarly matured. Auto-annotation with SAM and YOLO models is built-in — draw a rough box and let the model propagate annotations across a video sequence. For teams processing large video datasets, this is a genuine time-saver that was previously only available in expensive enterprise products.

The economics are the real argument. Running Label Studio or CVAT on a modest cloud VM costs $50–$200 per month depending on your storage and team size — compared to thousands per month for enterprise SaaS. For early-stage ML teams, startups, and research groups, this is the difference between staying within budget and blowing it on annotation tooling.

The honest trade-off remains: open-source tools require more setup time, some technical comfort, and self-managed infrastructure. If your team has no one comfortable with Docker and basic API integration, the enterprise SaaS products will save you time even if they cost more.
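One way to weigh that trade-off is to count the engineering time self-hosting consumes. The sketch below computes the number of maintenance hours per month at which a self-hosted setup costs as much as a SaaS plan. The $200 VM figure comes from the range above; the $3,000 SaaS price and $100 hourly rate are placeholders for illustration, not quotes from any vendor.

```python
def monthly_cost_self_hosted(vm_cost, maintenance_hours, hourly_rate):
    """Total monthly cost of self-hosting: infrastructure plus engineer time."""
    return vm_cost + maintenance_hours * hourly_rate


def break_even_hours(saas_cost, vm_cost, hourly_rate):
    """Maintenance hours per month at which self-hosting matches the SaaS bill."""
    return (saas_cost - vm_cost) / hourly_rate


# Placeholder inputs: replace with your own quotes and salary figures.
vm_cost = 200        # upper end of the cloud VM range mentioned above
saas_cost = 3000     # assumed stand-in for "thousands per month"
hourly_rate = 100    # assumed fully loaded engineer cost per hour

print(break_even_hours(saas_cost, vm_cost, hourly_rate))   # 28.0
print(monthly_cost_self_hosted(vm_cost, 10, hourly_rate))  # 1200
```

Under these assumed numbers, self-hosting stays cheaper as long as upkeep takes less than about 28 hours a month. A team without Docker experience can burn through that quickly, which is the case where SaaS wins.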

The Hidden Cost Factor: What Enterprise Tools Really Charge — and When They Are Worth It

Enterprise pricing is where most teams get surprised. Labelbox and Scale AI don't publish their enterprise prices; you have to talk to sales. In practice, costs scale with your usage, and large-scale annotation projects can easily run into five figures per month.

Here's a rough framework for whether the enterprise price premium makes sense:

Scale AI and Labelbox are worth it when you have a dedicated ML operations team, you're moving fast on a high-value AI product, and the cost is a manageable fraction of your overall development budget. The managed workforce option (where Scale provides trained annotators) is particularly valuable if you don't have annotation operations expertise in-house.

They're not worth it when you're a startup with a lean budget, a research team exploring a new domain, or a team that has the engineering capacity to self-manage an open-source pipeline. The productivity gains from a polished UI rarely justify 10–20× cost differences over self-hosted alternatives.

One trend worth watching: managed labeling services like Amazon SageMaker Ground Truth Plus and Labeler.ai are emerging as middle-ground options. They offer done-for-you labeling at per-label prices without the full enterprise contract overhead. For teams that need human annotators but don't want Scale AI's price tag, these are increasingly viable.

How to Choose the Right Data Labeling Tool: A Decision Framework

Rather than a ranked list (because no single tool wins across all dimensions), here's how to think about the choice:

1. What type of data are you labeling?

If it's primarily text, Label Studio or Doccano handle it well. For computer vision (images and video), CVAT or Supervisely are purpose-built. For multi-modal or cross-format projects, Labelbox or Scale AI offer more flexibility at the cost of complexity.

2. Do you need self-hosting?

If your data can't leave your infrastructure (healthcare records, proprietary documents, user data under GDPR), self-hosted Label Studio or CVAT are the most practical options among the major tools, and Doccano can also be self-hosted for text-only work. Cloud-native services like SageMaker Ground Truth and Labelbox (cloud tier) require data to move through their infrastructure.

3. How large is your team?

Solo researcher or small team with technical skills? Label Studio or CVAT, self-hosted. Growing ML team with annotation operations needs? Scale AI's managed workforce or Labelbox's collaboration features start making sense. Large enterprise with an existing MLOps stack? Evaluate Labelbox for its governance tooling and integration ecosystem.

4. What's your budget?

Budget-constrained or exploring a new domain? Start with Label Studio Community (free). Have AWS or GCP infrastructure already? Ground Truth or Vertex AI are cost-efficient add-ons. Have a serious project budget and need speed? Scale AI or Labelbox pay for themselves in saved engineering time.

5. Do you need LLM/active learning integration?

If you want foundation model pre-labeling or active learning loops, Label Studio's ML backend and Prodigy's tight spaCy integration are the strongest options. Most enterprise tools support model-assisted labeling too, but the open-source options give you more control over the pipeline.
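For readers who prefer to see the framework as logic, here is a rough encoding of the questions above as a shortlist function. It is a simplification under stated assumptions: the function name, the category keys, and the fallback rule are hypothetical, and it ignores team size and the finer trade-offs discussed in each answer.

```python
# Starting candidates per data type, taken from question 1.
CANDIDATES = {
    "text": ["Label Studio", "Doccano"],
    "vision": ["CVAT", "Supervisely"],
    "multimodal": ["Labelbox", "Scale AI"],
}
# Open-source tools from the roundup that can run on your own infrastructure.
SELF_HOSTABLE = {"Label Studio", "CVAT", "Doccano"}
ENTERPRISE = {"Labelbox", "Scale AI"}
CLOUD_NATIVE = {"aws": "SageMaker Ground Truth", "gcp": "Vertex AI Data Labeling"}


def shortlist(data_type, must_self_host=False, cloud=None, lean_budget=True):
    """Return a rough shortlist of tools to evaluate first."""
    tools = list(CANDIDATES[data_type])

    # Question 4: existing AWS or GCP users get the matching cloud service.
    if cloud in CLOUD_NATIVE:
        tools.append(CLOUD_NATIVE[cloud])

    # Question 4: on a lean budget, drop enterprise platforms and make sure
    # the free Label Studio Community edition is on the list.
    if lean_budget:
        tools = [t for t in tools if t not in ENTERPRISE]
        if "Label Studio" not in tools:
            tools.insert(0, "Label Studio")

    # Question 2: a self-hosting requirement removes hosted services.
    if must_self_host:
        tools = [t for t in tools if t in SELF_HOSTABLE]
        if not tools:
            # Assumed fallback: Label Studio covers the widest range of data types.
            tools = ["Label Studio"]
    return tools


print(shortlist("text", must_self_host=True))
# ['Label Studio', 'Doccano']
print(shortlist("vision", cloud="aws", lean_budget=False))
# ['CVAT', 'Supervisely', 'SageMaker Ground Truth']
```

Treat the output as a starting point for evaluation, not a verdict; the answers to questions 3 and 5 still need human judgment.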

Wrapping Up: Building Quality Training Data on a Budget

The data labeling landscape in 2026 is unrecognizable from five years ago. Open-source tools have closed the capability gap, and LLM-assisted workflows have fundamentally changed the economics of building quality training datasets. You no longer need a massive budget to get started — but you do need a deliberate strategy.

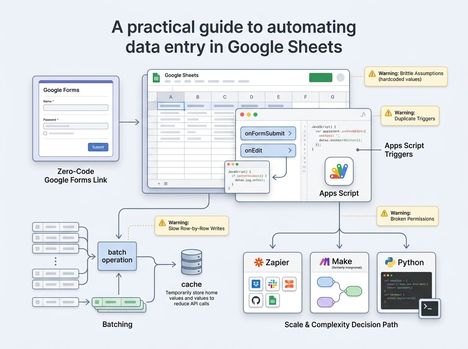

A practical approach for most teams: start with Label Studio for its versatility and self-host it if your data requires it. Use a foundation model (CLIP, SAM, or a fine-tuned LLM) to generate pre-labels on your raw data. Route the model's uncertain predictions to human annotators using active learning, so your reviewers spend time where it actually matters. Iterate on your labeling schema based on model error analysis, not gut feeling.

That hybrid approach — LLM pre-label, human QA, active learning for prioritization — is the workflow that modern ML teams are converging on. It's faster, cheaper, and produces better training data than the old manual-first approach. The tools exist to build it. The only remaining constraint is knowing how to put the pieces together.
